Capturing

I used qdslrdashboard to control the camera. Unfortunately, it stopped working after 20 minutes or so, but at least it didn't disconnect as I had experienced earlier. I wondered whether this has to do with Android's scheduling mechanism, and whether the app can't run well in the background when the screen is dark. I chose the "keep screen on" option in qdslrdashboard and that seemed to fix it. Of course, that also means that the battery drains much faster.

The whole capturing setup now consists of:

- the camera itself

- the intervalometer (I take a photo every 30 seconds)

- an Android phone or tablet running qdslrdashboard (connected to the camera either via USB or wifi)

- a USB recharger for the Android device (which means I have to connect via wifi)

... oh, and the handwarmers strapped to the 14-24mm lens to avoid dew on the lens.

Once set up, it works very well and doesn't require any fiddling.

One issue that I encountered a couple of times was that qdslrdashboard stopped adjusting the exposure or completely disconnected from the camera (in either USB or wifi mode). I asked on the qdslrdashboard forum, and the only suggestion was to use the "keep screen on" setting. That works, but then the tablet/phone discharges really quickly, so I have to use a USB recharger (and connect to the camera via wifi).

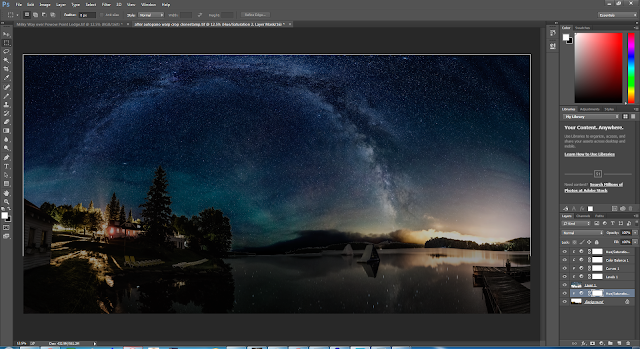

Processing

The latest version of LRTimelapse made processing even easier. Now you only do one pass (rather than two, as previously) between LRTimelapse and Lightroom. I found that the key piece is the selection of the keyframes:

- I need to make sure that I have keyframes at all the "significant" moments. E.g. when the sky goes red, I make sure that one keyframe is in the middle of it so that I can shift the white balance slightly to bring out the red more. LRTimelapse only allows creating n keyframes at equidistant times in the video, so I often have to choose too many to make sure that one falls at the right moment.

- At those keyframes, I carefully adjust the images to create the transitions that I want. E.g. for this timelapse, these were my keyframes:

And this is how I edited them:

You can see that I changed them in a way that creates a consistent flow from bright to darker and preserves the color (in particular the blue sky).

LRTimelapse then takes these keyframes and interpolates all the images in between to create a smooth transition. It also smooths out the step function that was created when taking the images (qdslrdashboard always takes 3 images with the same exposure time and ISO, and only then adjusts them if the images are darker than the reference point).
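
To make the keyframe idea more concrete, here is a minimal sketch in Python. It is not how LRTimelapse works internally (I don't know its actual algorithm); it assumes plain linear interpolation of a single setting, and the function names `equidistant_keyframes` and `interpolate` are made up for illustration.

```python
def equidistant_keyframes(n_frames, n_keys):
    """Return n_keys frame indices spread evenly from the first to the last frame."""
    if n_keys < 2 or n_frames < n_keys:
        raise ValueError("need at least 2 keyframes and at least as many frames as keyframes")
    step = (n_frames - 1) / (n_keys - 1)
    return [round(i * step) for i in range(n_keys)]


def interpolate(key_indices, key_values, n_frames):
    """Linearly interpolate one setting (e.g. an exposure offset) for every frame.

    Assumption: linear interpolation between neighbouring keyframes. The real
    tool may use a different (smoother) curve.
    """
    values = []
    seg = 0  # index of the keyframe segment the current frame falls into
    for frame in range(n_frames):
        # Move on to the next segment once we have passed its right-hand keyframe.
        while seg < len(key_indices) - 2 and frame > key_indices[seg + 1]:
            seg += 1
        a, b = key_indices[seg], key_indices[seg + 1]
        t = (frame - a) / (b - a)
        values.append(key_values[seg] + t * (key_values[seg + 1] - key_values[seg]))
    return values


# Hypothetical example: 9 frames, 3 keyframes, hand-edited exposure offsets.
keys = equidistant_keyframes(9, 3)
offsets = interpolate(keys, [0.0, 1.0, -1.0], 9)
print(keys, offsets)
```

Because the keyframes are equidistant, the only way to get one closer to a particular moment is to increase `n_keys`, which is why I often end up with more keyframes than I really need.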

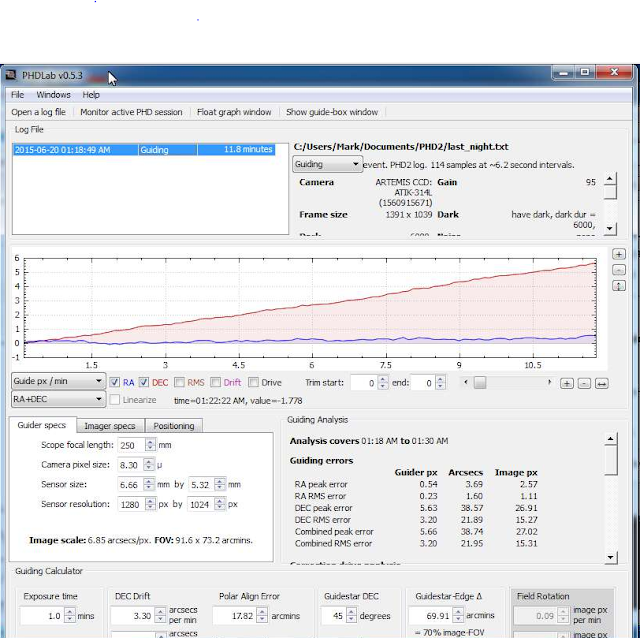

In the photo overlay, you can see the different progressions:

- dark blue: the original images (you can see the sawtooth curve where qdslrdashboard adjusted the exposure time/ISO, and you can also see that qdslrdashboard twice stopped adjusting; see the disconnect issue above)

- yellow: the corrections

- light blue: the resulting smooth progression from bright to dark.
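
The relationship between the three curves can be sketched roughly as follows. This is only an illustration under my own assumptions: I use a simple moving average as a stand-in for the smooth target curve, which is not necessarily what LRTimelapse does, and the names `moving_average` and `corrections` are hypothetical.

```python
def moving_average(values, window):
    """Smooth a list of per-frame brightness values with a centred moving average."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out


def corrections(original, window=5):
    """Per-frame correction (the yellow curve) that moves the original brightness
    (dark blue) onto the smoothed target (light blue)."""
    target = moving_average(original, window)
    return [t - o for t, o in zip(target, original)]


# Made-up sawtooth: brightness drops over 3 frames, then the exposure is bumped up.
original = [10.0, 9.0, 8.0, 9.5, 8.5, 7.5, 9.0, 8.0, 7.0]
corr = corrections(original, window=3)
smoothed = [o + c for o, c in zip(original, corr)]
print(corr)
print(smoothed)
```

A wider `window` gives a smoother result, at the cost of flattening real changes in brightness.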